AI In The Office: Can We Build A Future Where Trust Thrives?

Transparency and ethics hold the key to a collaborative workforce of humans and machines.

Is AI losing our trust? The jury is still out, but cracks are showing. Recent research (scroll down to read the study) suggests that people are losing trust in AI. Opaque algorithms fuel anxieties about bias and discrimination. "Deepfakes" blur the line between truth and fiction. We marvel at AI's power but fear its potential misuse in the workplace, at home, and elsewhere. Building trust requires transparency: shedding light on AI's inner workings and ensuring that its decisions are fair. The future of AI hinges on a delicate dance: harnessing its potential while respecting human values.

But before we go further, let’s take a look at the AI Jobs Indicator.

NEW: You can search this newsletter’s website using our AI-powered chatbot. Search for answers to questions on AI and employment. Go ahead. Try it!

In Today’s Newsletter:

China Surpasses US in Producing Leading AI Talent, Now Home to Nearly Half of World's Top Researchers

This AI Model Can Predict Workplace Accidents Before They Happen

Ongoing Tech Layoffs Persist as AI Slows Reemployment

Steps Employers Should Take Before Using AI for Hiring

Google.org Launches $20 Million Program to Support Nonprofits Harnessing Gen-AI

After Devin, Here Comes Devika

MIT's New Working Group On AI

Indeed’s New AI Tools

Center for AI Safety Launches SafeBench Competition to Advance AI System Assessment

US Artists Say Stop Unauthorized Use Of Their Music

Elon Musk Discusses AI Risks at Abundance Summit

US and UK Join Forces for AI Safety: A Landmark Deal Analyzed

Starter Package For AI Ethics In Journalism

Is This US Ban A Setback For AI Agents?

Google's DeepMind Study Reveals AI System Outperforms Humans in Fact-Checking

French Commission Proposes AI Action Plan

PwC Middle East Teams Up with Microsoft to Establish AI Excellence Center in Saudi Arabia

How AI Is Reshaping Industry In UAE And Saudi Arabia

Meta Researchers Suggest AI Requires a "Body" for Genuine Intelligence

2024 Mozilla Senior Fellowship

Apple Unveils ReALM

….plus, TopPicks, Demo Day Events and more….

“AI For Real Demo Day” Goes “Live”

As promised in last week’s newsletter, “AI For Real Demo Day” has gone “live”.

Are you developing groundbreaking AI solutions with real-world applications? Then, "AI For Real Demo Day" is your chance to demonstrate your labor of love.

Present your AI product or service to a community of potential users. Tell 'em how it actually works.

Register early to avail yourself of early-bird offers!* (This is a fee-based service.)

(*T&Cs apply).

“AI For Real” Is Creating Waves. Join The Community Now

“AI For Real” is now a vibrant, fast-growing online global universe. We are a community catering to the average Joe and Jane, not just AI experts and practitioners.

We delve deep into the latest AI breakthroughs, making them accessible and pertinent to your everyday life. Our focus extends beyond just sharing AI advancements; we explore their impact on common aspects like employment and ethical dilemmas. Stay abreast of cutting-edge research by subscribing to our services.

We offer many services, including “Learn AI for real”, “AI For Real Demo Day”, newsletters, articles, etc. To stay in the loop and get updates, subscribe to all or any of our services.

Join us on this journey of discovery and exploration as we uncover the deep influence of AI on our daily lives.

Our motto: Breaking Down AI For Everyone

The Ethical Buck Stops With Us: Why AI Isn't to Blame

Let's talk about workplace ethics in the age of AI. Here's the uncomfortable truth: ethics aren't some magical download from a machine. Bias and ethics are human matters, and the responsibility for them lies squarely with us. Algorithms can be biased, sure, but that bias comes from humans. We build it in and we code it, often unconsciously.

Think about it. Before the rise of fancy AI recruitment tools, were workplaces bastions of fairness? Did unconscious bias magically disappear? Of course not. We've always grappled with issues of discrimination and prejudice. AI, for all its complexity, is just a mirror reflecting a very human problem.

So, why the sudden outcry about ethics now? Because AI exposes our flaws with brutal efficiency. It forces us to confront biases baked into our systems. It's a wake-up call, not an excuse.

The solution? We need to become the ethical architects of our workplaces. We need to actively root out bias in ourselves and in our processes, human and algorithmic alike. Let's hold ourselves accountable, not the machines that amplify our shortcomings. The future of work isn't about AI ethics; it's about human ethics, and that's a conversation long overdue.

What do you have to say about this? Write in the “Comments” section.

China Surpasses US in Producing Leading AI Talent, Now Home to Nearly Half of World's Top Researchers

Recent findings from MacroPolo reveal a remarkable shift in the global AI landscape, with China emerging as the dominant force in cultivating top-tier AI specialists. The research indicates that China now hosts close to half of the world's foremost AI researchers, a substantial increase from just three years ago when it accounted for one-third.

This surge in Chinese AI prowess is attributed to substantial investments in AI education and to a reversal of the earlier trend in which Chinese scholars chose to stay in the US after completing their studies. Despite this shift, the US has maintained its pool of AI talent, and it benefits notably from Chinese-born researchers who contribute significantly to American tech and academia.

What It Means For Us

The findings underscore the increasingly global nature of AI competition and innovation, emphasizing the pivotal role China plays in shaping the future of artificial intelligence.

Source: macropolo.org

Exciting Opportunities for Newsletter Sponsorship!

Discover available ad and sponsorship slots for this newsletter by clicking on this link. Explore the various packages to find the perfect fit for your brand.

If you would like further information, please don't hesitate to contact me. Let's collaborate and customize the best deal tailored to your specific needs. Click on the tab above. Or, for individual requirements, write to marketing (at) newagecontentservices.com

This AI Model Can Predict Workplace Accidents Before They Happen

Buddywise has developed computer vision models that detect potential workplace hazards and send emergency alerts in the event of an injury or death, with the goal of a "zero-injury future" in workplaces across Europe.

The AI platform analyzes real-time images from workplace cameras to identify risks such as reckless forklift operation, slips and falls, and failure to wear required safety gear. It keeps the data anonymous and lets customers customize the types of alerts they receive.

With €3.5mn in seed funding, Buddywise plans to expand its team, attract top tech talent, and grow its customer base in European industrial sectors. The platform has already been adopted in Sweden, Finland, Latvia, and Poland.

Source: thenextweb.com

Ongoing Tech Layoffs Persist as AI Slows Reemployment

The technology sector has seen a significant number of layoffs despite the presence of open positions, raising concerns about employment in the industry.

The analysis counts more than 310,000 layoffs from 200 tech companies over the past two years.

While open positions exist, finding work is becoming increasingly difficult, partly because AI-based hiring tools may screen out qualified candidates.

The technology roundup also discusses the collaboration between Apple and Google on AI and the reported unhappiness of people under 30.

Source: computerworld.com

Steps Employers Should Take Before Using AI for Hiring

The article provides a comprehensive guide for employers on implementing AI tools in the workplace, particularly for hiring and recruiting practices.

It emphasizes the importance of assessing use cases, reviewing applicable laws, asking questions of AI vendors, developing an implementation plan, and establishing a system to monitor outcomes.
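For the last step in that list, monitoring outcomes, one widely used yardstick in the US is the "four-fifths rule" of thumb from the Uniform Guidelines on Employee Selection Procedures: if one group's selection rate is below 80% of the highest group's rate, that may signal adverse impact worth investigating. The sketch below applies that check to the pass-through counts of an AI screening tool. It is an illustration, not the method the Reuters article prescribes; the group names and numbers are made up, and the rule is a screening heuristic rather than a legal test on its own.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class GroupOutcome:
    """Applicants from one (hypothetical) group and how many the AI screen advanced."""
    group: str
    applicants: int
    selected: int

    @property
    def selection_rate(self) -> float:
        return self.selected / self.applicants if self.applicants else 0.0


def impact_ratios(outcomes: List[GroupOutcome]) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's selection rate."""
    best = max(o.selection_rate for o in outcomes)
    if best == 0:
        # Nobody was selected at all: no group is disadvantaged relative to another.
        return {o.group: 1.0 for o in outcomes}
    return {o.group: o.selection_rate / best for o in outcomes}


def flag_adverse_impact(outcomes: List[GroupOutcome], threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths (0.8) threshold."""
    return [g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold]


if __name__ == "__main__":
    # Hypothetical quarterly numbers from an AI resume screener.
    data = [
        GroupOutcome("group_a", applicants=200, selected=60),  # 30% pass rate
        GroupOutcome("group_b", applicants=150, selected=30),  # 20% pass rate
    ]
    for group, ratio in impact_ratios(data).items():
        print(f"{group}: impact ratio {ratio:.2f}")
    print("Flagged for review:", flag_adverse_impact(data))
```

A flag here is a prompt for human review of the tool and its training data, not an automatic verdict of discrimination.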

Source: reuters.com

This newsletter, “All About Content…And AI”, covers the happenings in the world of content and digital marketing at the intersection of technology and, more specifically, AI.

Google.org Launches $20 Million Program to Support Nonprofits Harnessing Gen-AI

Google.org, the philanthropic arm of Google, has unveiled a groundbreaking initiative to support nonprofits developing technology powered by generative AI. The new program, dubbed "Google.org Accelerator: Generative AI," is backed by $20 million in grants and will initially involve 21 nonprofit organizations.

Among the inaugural participants are notable entities such as Quill.org, which specializes in AI-driven tools for providing feedback on student writing, and the World Bank, which is pioneering a generative AI application to enhance accessibility to development research.

In addition to financial support, nonprofits enrolled in the six-month accelerator program will benefit from a comprehensive package of resources. This includes access to technical training sessions, workshops, mentorship opportunities, and guidance from dedicated "AI coaches."

Furthermore, Google.org's fellowship program will see teams of Google employees working full-time with three selected nonprofits (Tarjimly, Benefits Data Trust, and mRelief) for up to six months. Their objective will be to provide extensive support in launching and implementing the proposed generative AI tools.

What It Means For Us

The initiative marks a significant step forward in fostering collaboration between technology giants and nonprofit organizations, highlighting the potential of generative AI to address pressing societal challenges. Through this initiative, Google.org aims to catalyze innovation and empower nonprofits to harness the transformative capabilities of AI for social good.

Source: techcrunch.com

After Devin, Here Comes Devika

First, there was Devin. Now comes Devika, an open-source AI software engineer developed in India. It aims to rival the capabilities of Devin, the US-developed AI software engineer, by using machine learning and natural language processing to understand human instructions and autonomously execute coding tasks.

Devika positions itself as a collaborative partner for developers, offering a transparent and community-involved alternative to Devin, with the potential to impact the future of coding and software development.

The launch of Devika contributes to the global race for AI innovation in software development, with its creator, Mufeed VH, extending an invitation to early testers and contributors for the project, which boasts features such as agentic models and the ability to deploy static websites.

Source: ndtv.com

Indeed’s New AI Tools

Indeed has launched AI-powered tools, Smart Sourcing and Indeed Profile, to streamline job matching and hiring for employers and job seekers.

Source: pymnts.com

MIT's New Working Group On AI

MIT has launched a working group to explore the impact of generative AI on the workforce.

The group aims to understand how these tools are being used, ensure responsible usage, and assess the impact on jobs and skills. It will conduct research, act as a platform for sharing experiences, and develop training resources.

Companies like Google and IBM are involved in the initiative, reflecting a broad interest in understanding and harnessing the potential of generative AI.

The MIT team driving this initiative is a multidisciplinary and multi-talented group including Senior Fellow Carey Goldberg and Work of the Future graduate fellows Sabiyyah Ali, Shakked Noy, Prerna Ravi, Azfar Sulaiman, Leandra Tejedor, Felix Wang, and Whitney Zhang.

Source: news.mit.edu

Center for AI Safety Launches SafeBench Competition to Advance AI System Assessment

The Center for AI Safety has unveiled SafeBench, a pioneering competition designed to incentivize the creation of new benchmarks for evaluating the safety of AI systems. Prizes total $250,000: three prizes of $50,000 and five prizes of $20,000 for the top-performing benchmarks.
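As a quick arithmetic check, the prize breakdown described above sums to the advertised total:

```latex
\underbrace{3 \times \$50{,}000}_{\$150{,}000} \;+\; \underbrace{5 \times \$20{,}000}_{\$100{,}000} \;=\; \$250{,}000
```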

SafeBench focuses on benchmarks that assess critical properties of AI systems, including robustness, monitoring capabilities, alignment, and suitability for safety applications. As inspiration for participants, the organizers point to existing benchmarks such as TruthfulQA, MACHIAVELLI, and HarmBench.

The competition is currently open for submissions, with a deadline of February 25, 2025. Winners will be announced in April 2025. A distinguished panel of judges drawn from the Center for AI Safety, the University of Chicago, AI2050, and Carnegie Mellon University will evaluate the submissions.

What It Means For Us

This initiative holds significant importance as it addresses the pressing need for standardized methods to measure and benchmark AI system safety. With regulatory bodies worldwide increasingly emphasizing the importance of such assessments, competitions like SafeBench play a crucial role in advancing this essential, albeit complex, endeavor.

Source: mlsafety.org

AI For Real is a reader-supported publication. To receive new posts and support my work, consider becoming a free or paid subscriber.

US Artists Say Stop Unauthorized Use Of Their Music

A diverse group of artists and songwriters is uniting to urge politicians to prioritize artists' rights and stop the unauthorized use of their music. They include Mick Jagger & Keith Richards, Steven Tyler & Joe Perry, Linkin Park, Sia, Regina Spektor, R.E.M., Lorde, Rosanne Cash, Blondie, and Elvis.

Source: artistrightnow.medium.com

Elon Musk Discusses AI Risks at Abundance Summit

During the recent Abundance Summit, Elon Musk engaged in “The Great AI Debate,” highlighting the potential dangers of artificial intelligence (AI). Musk estimated a 10 to 20% chance that AI could pose a threat to humanity. Despite this, he emphasized the greater likelihood of AI’s benefits outweighing its risks.

Musk, who has consistently voiced concerns about AI, reiterated the importance of regulation and responsible development. He founded xAI as a counterpoint to OpenAI, advocating for an AI that is truth-seeking and honest. Musk predicts that AI will surpass human intelligence by 2030, and he urged caution, comparing AI development to raising a child with god-like intellect.

What It Means For Us

Musk’s stance is clear: honesty is paramount in AI development to ensure safety for both AI and humans. Musk’s insights at the summit underscore the need for transparency and integrity in the rapidly evolving field of AI.

Source: pinkvilla.com

US and UK Join Forces for AI Safety: A Landmark Deal Analyzed

In a significant development, the US and UK have signed a first-of-its-kind agreement to collaborate on safety testing for advanced artificial intelligence. This deal signifies a growing global recognition of the potential risks posed by powerful AI and a commitment to proactive mitigation.

What It Means For Us

Safer AI Development: This collaboration aims to establish robust methods for evaluating AI safety. By sharing expertise and resources, both countries can accelerate the development of reliable AI testing procedures.

Global Leadership: The US and UK are taking a leading role in setting standards for safe AI development. This could influence other countries to adopt similar approaches.

Focus on Transparency: The agreement includes sharing information about AI capabilities and risks. This transparency is crucial for building public trust in AI and ensuring responsible development.

Challenges Ahead:

Standardization: Developing a unified approach to AI safety testing across different AI systems and applications will be a complex task.

Regulation: While the deal focuses on testing, questions remain about potential regulations and how they might be implemented.

Private Sector Involvement: The agreement mentions evaluating private sector AI models. Encouraging collaboration and information sharing from private companies will be essential.

Overall, this US-UK deal is a positive step towards ensuring the safe and responsible development of advanced AI. However, significant challenges remain in creating a comprehensive framework for global AI governance.

Source: BBC

- Your story matters. Your innovation matters -

If you are an AI entrepreneur*, startup founder, or similar, and are eager to say your piece in under a minute, drop us an email with the subject line “Yes, to 60 secs” at marketing (at) newagecontentservices.com. We promise to get back to you ASAP.

(*T&Cs apply)

Starter Package For AI Ethics In Journalism

Poynter has emphasized the need for newsrooms to adopt an ethics policy for using generative artificial intelligence (AI).

To that end, it has provided a starter package, collaboratively crafted by Poynter's Alex Mahadevan, Tony Elkins, and Kelly McBride. It serves as a manifesto of journalistic principles, anchoring AI exploration in the bedrock of accuracy, transparency, and audience confidence.

The policy includes specific guidelines for AI use in audience-facing, business, and back-end reporting contexts. It also emphasizes the importance of creating an AI committee and designating a leader to ensure ongoing oversight and communication.

What It Means For Us

An AI committee and designated leader are crucial for ongoing oversight and communication around AI experiments in newsrooms.

Source: Poynter

Is This US Ban A Setback For AI Agents?

It's a development that sends out a message. The US House of Representatives has banned its staff from using Microsoft's Copilot, a generative AI assistant, over data security concerns. The House's Chief Administrative Officer, Catherine Szpindor, was quoted as saying that the Office of Cybersecurity had deemed the Microsoft Copilot app "to be a risk to users" due to the threat of leaking House data to non-House-approved cloud services.

Microsoft has responded that it is working on AI tools that meet federal security requirements, but the ban sends a clear signal to other government agencies and even to private organizations.

Just how safe is it to "expose" the private data of a company, an agency, or an individual to an AI agent? Have the companies providing such services, like Microsoft, adequately addressed this issue? The situation underscores the importance of addressing data security, privacy, and transparency concerns as organizations incorporate AI copilots and similar systems.

Data security is the key challenge. With AI agents learning from private data and also mimicking human behavior, there are risks of exposing sensitive information, potentially leading to data breaches. Developers should emphasize data security measures, such as encryption, secure data storage, and stringent access controls. Organizations must create clear data handling and sharing policies to ensure responsible and ethical use of AI systems.
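To make "clear data handling and sharing policies" a little more concrete, here is a minimal sketch of a deny-by-default policy gate an organization might place in front of an AI assistant: before a document leaves the building, its sensitivity label is checked against the destinations approved for that label, which is exactly the kind of control the House was worried about. The labels, destination names, and policy table are all hypothetical; a real deployment would rely on its own data-classification scheme and access-control tooling.

```python
from enum import Enum
from typing import Dict, Set


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3


# Hypothetical policy table: destinations approved for each sensitivity level.
APPROVED_DESTINATIONS: Dict[Sensitivity, Set[str]] = {
    Sensitivity.PUBLIC: {"internal-llm", "approved-cloud-llm", "public-chatbot"},
    Sensitivity.INTERNAL: {"internal-llm", "approved-cloud-llm"},
    Sensitivity.CONFIDENTIAL: {"internal-llm"},
}


class PolicyViolation(Exception):
    """Raised when data would flow to a destination not approved for its label."""


def check_transfer(label: Sensitivity, destination: str) -> None:
    """Deny by default: allow only destinations explicitly approved for this label."""
    allowed = APPROVED_DESTINATIONS.get(label, set())
    if destination not in allowed:
        raise PolicyViolation(f"{label.name} data may not be sent to {destination!r}")


def send_to_assistant(text: str, label: Sensitivity, destination: str) -> str:
    """Check the policy first; a real system would then call the assistant."""
    check_transfer(label, destination)
    # Placeholder for the actual AI call: this sketch only returns a receipt.
    return f"sent {len(text)} chars of {label.name} data to {destination}"


if __name__ == "__main__":
    print(send_to_assistant("Quarterly roadmap", Sensitivity.INTERNAL, "approved-cloud-llm"))
    try:
        send_to_assistant("Draft legislation", Sensitivity.CONFIDENTIAL, "approved-cloud-llm")
    except PolicyViolation as err:
        print("Blocked:", err)
```

The deny-by-default design means a new, unreviewed AI service is blocked until someone deliberately adds it to the approved list.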

Transparency and accountability are also essential in AI systems. Organizations should ensure AI agents can explain decision-making processes and provide clear, understandable explanations for recommendations. Mechanisms for redress when AI systems make harmful decisions are necessary, fostering transparency and accountability and building public trust.

Of course, there are other associated issues such as tackling potential biases in AI systems.

What It Means For Us

The US House of Representatives' ban on Microsoft's Copilot highlights the security and ethical challenges of AI adoption. Those providing such AI agents need to demonstrate beyond doubt that AI can drive innovation and improvement with minimal risk and harm.

Source: india.com

Google's DeepMind Study Reveals AI System Outperforms Humans in Fact-Checking

A groundbreaking study conducted by Google's DeepMind research unit has unveiled a remarkable finding: artificial intelligence (AI) systems can surpass human fact-checkers in evaluating the accuracy of information generated by large language models.

Published under the title "Long-form factuality in large language models" on the pre-print server arXiv, the paper introduces a pioneering methodology termed Search-Augmented Factuality Evaluator (SAFE). This innovative approach demonstrates the potential of AI to revolutionize fact-checking processes, indicating a significant leap forward in the capabilities of machine learning algorithms.

The findings underscore the increasingly pivotal role of AI in addressing the challenges posed by misinformation and ensuring the accuracy of information disseminated across digital platforms. With the development of SAFE, Google's DeepMind aims to contribute to the advancement of AI-driven solutions for enhancing information integrity in the digital age.

The study also highlights the cost savings of using SAFE compared with human fact-checkers and the importance of benchmarking AI models against expert human fact-checkers. It emphasizes the need for human baselines and rigorous, transparent benchmarking to build trust and accountability in automated fact-checking technology.
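The core idea behind a search-augmented fact checker can be sketched in a few lines. As described in the paper, SAFE uses a language model to break a long-form response into individual facts and then checks each fact with Google Search queries. The sketch below is a heavily simplified, hypothetical stand-in, not DeepMind's code: it splits on sentences instead of using a language model, and `make_toy_search` and `is_supported` are toy word-matching functions over a tiny hard-coded corpus rather than real search or model calls.

```python
"""Toy sketch of search-augmented fact checking (hypothetical, not DeepMind's code)."""
import re
from typing import Callable, Dict, List, Set

SearchFn = Callable[[str], List[str]]


def _words(text: str) -> Set[str]:
    """Lower-cased word set used by the toy matching below."""
    return set(re.findall(r"\w+", text.lower()))


def split_into_facts(response: str) -> List[str]:
    """Stand-in for LLM-based splitting: treat each sentence as one fact."""
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    return [s for s in sentences if s]


def make_toy_search(corpus: List[str]) -> SearchFn:
    """Hypothetical search engine: return snippets sharing at least two words with the query."""
    def search(query: str) -> List[str]:
        q = _words(query)
        return [doc for doc in corpus if len(q & _words(doc)) >= 2]
    return search


def is_supported(fact: str, evidence: List[str]) -> bool:
    """Toy judgement: supported if one snippet contains every word of the fact."""
    return any(_words(fact) <= _words(doc) for doc in evidence)


def rate_response(response: str, search: SearchFn) -> Dict[str, bool]:
    """Split a long answer into facts, search for evidence on each, and rate it."""
    return {fact: is_supported(fact, search(fact)) for fact in split_into_facts(response)}


def factual_precision(ratings: Dict[str, bool]) -> float:
    """Share of facts rated as supported (0.0 if there are no facts)."""
    return sum(ratings.values()) / len(ratings) if ratings else 0.0


if __name__ == "__main__":
    corpus = [
        "The Eiffel Tower is in Paris and was completed in 1889.",
        "Mount Everest is the highest mountain above sea level.",
    ]
    ratings = rate_response(
        "The Eiffel Tower is in Paris. The Eiffel Tower is in Rome.",
        make_toy_search(corpus),
    )
    for fact, ok in ratings.items():
        print("SUPPORTED  " if ok else "UNSUPPORTED", fact)
    print("precision:", factual_precision(ratings))
```

In a real system, each toy function would be replaced by a model call or a live search query, which is where both the accuracy and the cost savings reported in the study come from.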

Source: venturebeat.com

French Commission Proposes AI Action Plan

In a recent development, the French government received a comprehensive report from an ad hoc Commission outlining an ambitious yet realistic action plan for advancing artificial intelligence in France. The report takes a balanced view of AI, neither overly pessimistic nor overly optimistic, and suggests that AI will neither cause mass unemployment nor automatically accelerate growth.

Six Pillars for Progress

The action plan is built on six pillars designed to harness AI as a force for progress and to shape the future. These pillars underpin 25 pragmatic recommendations, including flagship actions overseen by the Ministry of Higher Education, as well as international collaborations.

Investing in AI’s Future

The Commission recommends an annual investment of approximately €4 billion over the next five years. This investment aims to catalyze AI integration into the economy and promote widespread understanding and training across society.
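Taking the recommendation at face value, the annual figure implies a total envelope of roughly €20 billion over the five years. This is simple arithmetic on the numbers reported above, not a total quoted from the report:

```latex
\text{Total} \approx 4\ \text{billion EUR/year} \times 5\ \text{years} = 20\ \text{billion EUR}
```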

Economic and Social Benefits at Stake

The report’s authors argue that failing to act could result in significant economic and social losses, urging for immediate, collective, and long-term action to ensure France remains at the forefront of AI innovation and reaps its benefits.

Source: campusfrance.org

PwC Middle East Teams Up with Microsoft to Establish AI Excellence Center in Saudi Arabia

PwC and Microsoft are committed to developing local AI talent through the launch of an AI Centre of Excellence in Riyadh, aiming to recruit top talent from leading universities in the Kingdom. The initiative is part of Saudi Vision 2030 and is designed to drive economic growth and position the nation as a leader in knowledge and technological innovation.

The Middle East is expected to accrue 2% of the total global benefits of AI in 2030, equivalent to 320 billion USD.

Saudi Arabia is expected to gain over $135.2 billion from AI in 2030, a prospect that helps explain the launch of an artificial intelligence Centre of Excellence in Riyadh.
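The two figures above also imply the size of the global prize and Saudi Arabia's slice of the regional gain. This is simple arithmetic on the numbers as reported, not additional data from the source:

```latex
\begin{aligned}
\text{Implied global AI benefit in 2030} &\approx \frac{\$320\ \text{billion}}{0.02} = \$16\ \text{trillion} \\
\text{Saudi Arabia's share of the Middle East gain} &\approx \frac{\$135.2\ \text{billion}}{\$320\ \text{billion}} \approx 42\%
\end{aligned}
```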

Source: cio.com

“AI For Real Demo Day” Is “Live”

Here's The 1st Solution To Be Showcased:

🚀 Introducing "Coral AI": The Document Summarizer 🚀

📚 Explore limitless possibilities with Coral AI:

Seamlessly upload any PDF: textbooks, contracts, financial documents, and more. Gain deeper insights by asking questions about your document. Search effortlessly using full sentences, not just keywords. Break language barriers with translation in 90+ languages.

Watch how to actually use Coral AI* to create an "outline" from any of your documents. The demo is now 'Live' on our Discord Channel.

(*special discount for AI For Real community members)

How AI Is Reshaping Industry In UAE And Saudi Arabia

The AI revolution is rapidly reshaping industries in the Kingdom of Saudi Arabia (KSA) and the United Arab Emirates (UAE), with 87% of companies planning to invest in artificial intelligence (AI) and machine learning within the next year.

According to a study, the workforce in the KSA and UAE faces challenges in adopting smart manufacturing technologies, with a shortage of skilled workers being the biggest barrier. As a result, companies there are focusing on addressing technology challenges and the skills gap through increased automation and organizational change management.

The 9th annual study "The State of Smart Manufacturing" by Rockwell Automation reveals that AI is revolutionizing the manufacturing industry in Saudi Arabia and the UAE. A majority of the companies in the region have invested or plan to invest in AI within the next year, with applications including quality control, cybersecurity, robotics, and supply chain management.

Companies are focusing on international expansion, see climate change as a significant obstacle to growth, and are investing in technology to drive efficiency and competitiveness.

What It Means For Us

As "AI For Real" has reported before, it looks like AI is going to be huge in the Middle East.

Source: processandcontroltoday.com

Meta Researchers Suggest AI Requires a "Body" for Genuine Intelligence

The article discusses the embodiment hypothesis, which suggests that true intelligence can only emerge in AI if it has a physical body to interact with the world. It explores the use of simulations to train embodied AI before deploying it in the real world.

The article also highlights the limitations of simulations in fully preparing AI for real-world interactions and mentions examples of companies deploying AI-powered robots into real-world settings. It ends by speculating on the potential for the combination of advanced AI brains and state-of-the-art robot bodies to lead to human-like intelligence in artificial beings.

Source: freethink.com

2024 Mozilla Senior Fellowship

Mozilla’s Senior Fellowship Program brings together experts advancing trustworthy AI and promoting an ethical, responsible and inclusive internet environment.

The 2024 fellowship focuses on projects aligned with Mozilla's vision for a trustworthy AI ecosystem and ethical internet environment, particularly in key geographies like East and Southern Africa, Europe, Brazil, India, and the United States. Preference is given to projects addressing social justice issues and involving historically excluded communities. Applicants are invited to submit proposals for independent projects addressing current issues with consumer tech, dataset management, AI in public institutions, impact on adjacent issues, legislation and regulatory initiatives, and policy approaches for open source in AI and open innovation.

Apple Unveils ReALM: A Leap Forward in AI Context-Awareness for Voice Assistants

In a groundbreaking research paper, Apple’s team of researchers introduced ReALM, a state-of-the-art AI system engineered to gain a comprehensive understanding of on-screen activities, conversational context, and background operations.

Key Insights:

ReALM utilizes a unique method that transforms screen data into text, bypassing the need for complex image recognition parameters and leading to more streamlined AI operations on the device. The model takes into account both the content visible on the user’s screen and the ongoing tasks to gain a complete understanding of the user’s situation. As per the research paper, Apple’s larger ReALM models outperform GPT-4 significantly, despite having a smaller number of parameters.

Practical Application:

For instance, if a user is surfing a website and wishes to contact a business, they can simply tell Siri to “call the business.” Siri, powered by ReALM’s capabilities, can recognize the phone number displayed on the website and place the call directly.
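The "screen as text" idea behind this example can be illustrated with a toy sketch. It is loosely modeled on the approach the paper describes, where on-screen elements are ordered roughly top-to-bottom, then left-to-right, and written out as plain text a language model can read. Everything below is hypothetical: the `ScreenElement` type, the `[type: text]` entity tags, and `resolve_call_target`, which uses a simple rule in place of the language model ReALM actually relies on.

```python
"""Toy illustration of turning a screen into text for reference resolution (hypothetical)."""
import re
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ScreenElement:
    """One piece of on-screen text plus the centre of its bounding box."""
    text: str
    x: float
    y: float
    entity_type: Optional[str] = None  # e.g. "phone_number", if a parser tagged it


def screen_to_text(elements: List[ScreenElement], line_margin: float = 5.0) -> str:
    """Order elements top-to-bottom, then left-to-right, and render them as plain text.

    Elements whose vertical centres lie within `line_margin` of a line's first element
    share that line and are tab-separated; tagged entities are wrapped in [type: text].
    """
    lines: List[List[ScreenElement]] = []
    for el in sorted(elements, key=lambda e: (e.y, e.x)):
        if lines and abs(el.y - lines[-1][0].y) <= line_margin:
            lines[-1].append(el)
        else:
            lines.append([el])

    rendered = []
    for line in lines:
        parts = [
            f"[{el.entity_type}: {el.text}]" if el.entity_type else el.text
            for el in sorted(line, key=lambda e: e.x)
        ]
        rendered.append("\t".join(parts))
    return "\n".join(rendered)


def resolve_call_target(request: str, screen_text: str) -> Optional[str]:
    """Rule-based stand-in for the language model: map a 'call ...' request
    to the first phone number tagged in the screen text."""
    if "call" not in request.lower():
        return None
    match = re.search(r"\[phone_number: ([^\]]+)\]", screen_text)
    return match.group(1) if match else None


if __name__ == "__main__":
    screen = [
        ScreenElement("Call us", x=40, y=302),
        ScreenElement("Joe's Pizza", x=50, y=20),
        ScreenElement("(201) 555-0123", x=160, y=300, entity_type="phone_number"),
        ScreenElement("Open until 10pm", x=50, y=60),
    ]
    text = screen_to_text(screen)
    print(text)
    print("Dialing:", resolve_call_target("Call the business", text))
```

Because the screen becomes ordinary text, a text-only model can work out what "the business" refers to without running a separate image-recognition step, which is the efficiency argument made above.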

What It Means For Us

ReALM signifies a major breakthrough in improving the context-awareness of voice assistants. By efficiently interpreting on-screen content and additional contextual indicators, upcoming Siri updates could provide users with a more integrated and hands-free experience. In essence, the introduction of ReALM marks a significant step in the progression of voice assistant technology, promising to deliver more intuitive and personalized interactions that meet users’ requirements with increased accuracy and efficiency.

Source: arXiv

TopPicks: where every week, I shortlist interesting articles, posts, podcasts, and videos on AI.

(This is a fascinating futurist report on the issue of trust in machines. A must-read.)

Trust in AI Technology and Developers Declining Globally, Reveals Edelman Data Shared with Axios

New data from Edelman, disclosed exclusively to Axios, indicates a notable decline in trust toward AI technology and the companies behind its development, both in the United States and worldwide.

The significance of this trend is underscored by the ongoing deliberations among regulators worldwide regarding the formulation of appropriate rules and regulations for the rapidly expanding AI industry.

According to Justin Westcott, Edelman's global technology chair, trust represents the cornerstone of the AI era. However, he warns that the current state of affairs reflects an alarming deficit in trust. Westcott emphasizes the necessity for companies to transcend mere technical aspects of AI and instead focus on addressing its broader societal implications, including the reasons behind its implementation and its impact on various stakeholders.

Westcott stresses that the public demands a firm commitment to safeguarding personal privacy and rigorous scrutiny of AI's societal consequences by both scientists and ethicists. He believes that organizations prioritizing responsible AI, fostering transparent partnerships with communities and governments, and empowering users with control will not only emerge as industry leaders but also help restore trust in technology, which has eroded over time.

In essence, the path to rebuilding trust in AI lies in prioritizing ethical considerations, fostering transparency, and empowering users to have agency over their interactions with AI systems.

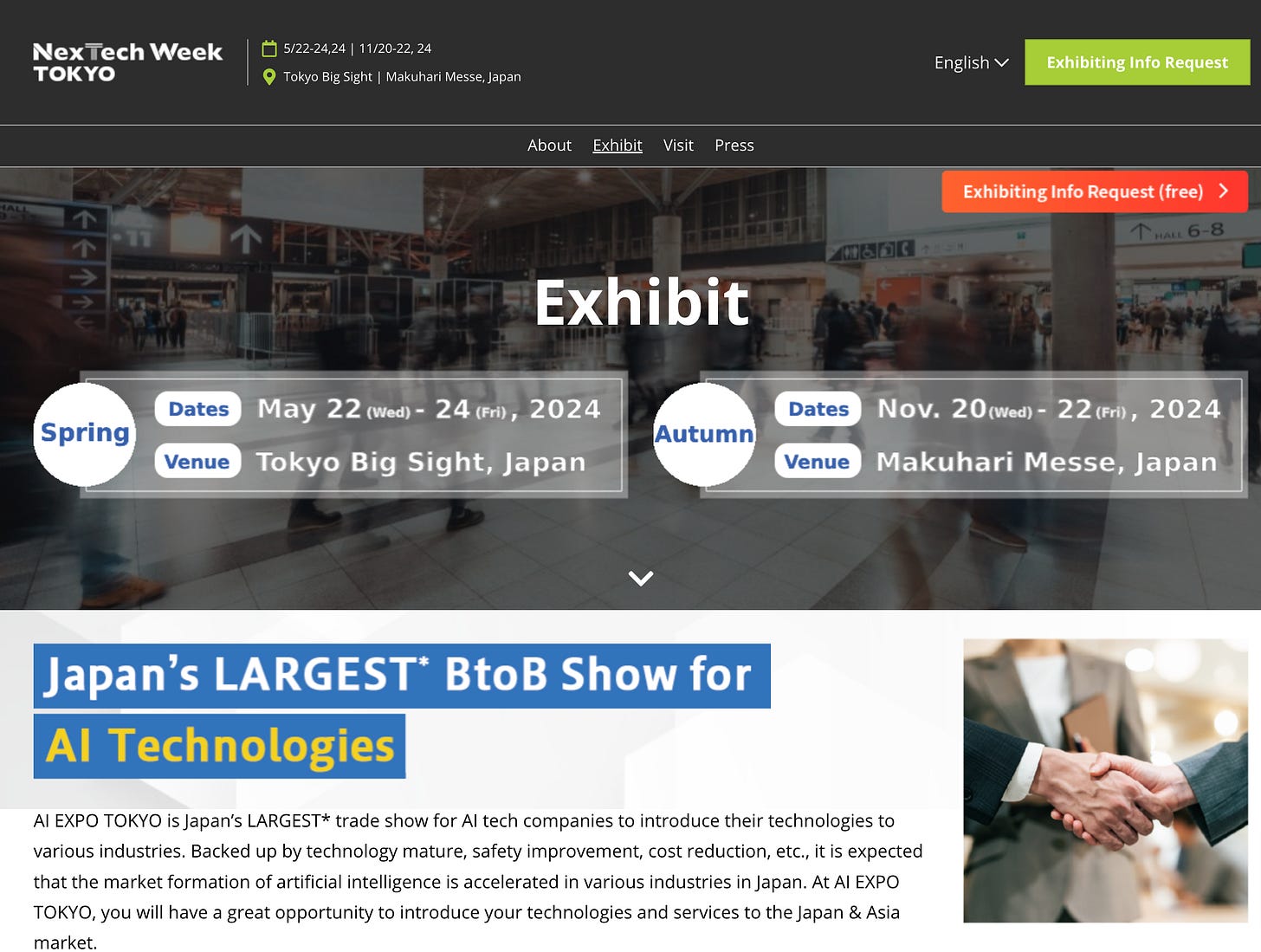

Japan’s Largest AI Tech Show

AI EXPO TOKYO is Japan’s largest trade show for AI tech companies to introduce their technologies to various industries. With maturing technology, improved safety, and lower costs, the market for artificial intelligence is expected to grow rapidly across industries in Japan. At AI EXPO TOKYO, you will have a great opportunity to introduce your technologies and services to the Japanese and Asian markets.

For details, click here.

Get In Touch to Unlock the Power of AI for Your Business and Personal Growth

Are You a Brand, a Business, or an Individual Looking to Get Insights Into Artificial Intelligence?

As an expert AI communicator, I specialize in bridging the gap between complex AI technologies and practical applications for businesses and individuals. Whether you're looking to enhance your company's tech capabilities or simply curious about how AI can streamline your daily life, I offer tailored solutions and insights.

Stay ahead of the curve with my services: your guide to navigating the ever-evolving world of artificial intelligence.

Contact me today.